Something real is happening in financial services, and it's moving faster than most people expected.

For years, "AI in banking" meant chatbots that couldn't answer your question and fraud alerts that flagged your own grocery run. That era is basically over. What's replacing it are AI agents — systems that can reason through a problem, pull data from multiple sources, draft outputs, and hand things off to a human for final sign-off. Less parlor trick, more junior analyst.

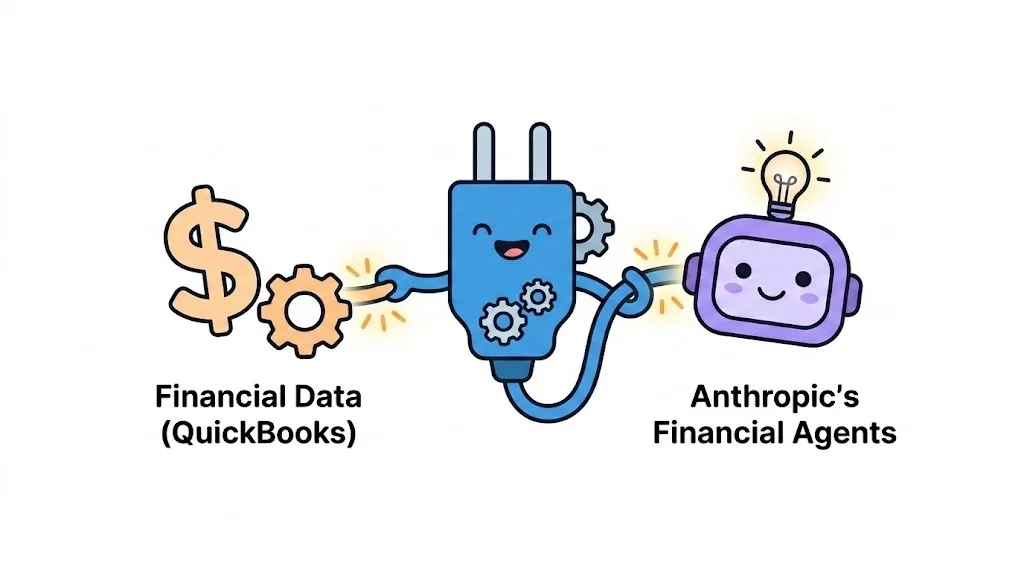

Anthropic recently released ten financial agent templates covering the kinds of work that eat up analyst hours: building pitchbooks, reviewing earnings transcripts, running KYC checks, closing the books at month-end. Claude's models currently lead the Vals AI Finance Agent benchmark with a score of over 64%, which matters because finance isn't just about generating text. It's about reasoning correctly under pressure with incomplete information.

What these agents actually do

The templates split roughly into two camps.

On the research side, there's an agent that drafts pitchbooks and builds comparable company lists, one that preps meeting briefs before client calls, and another that reads earnings transcripts and flags anything that might change your thesis. There's even a model-builder that pulls from data feeds and public filings to construct financial models, the kind of task that used to mean a late night for a first-year analyst.

The operations side is arguably more interesting. A KYC Screener that reviews source documents and escalates edge cases to compliance. A Month-End Closer that prepares journal entries and variance reports. A General Ledger Reconciler that calculates NAV. A Statement Auditor that checks financial statements ahead of audit season.

None of these replace the humans doing the work. But they do compress a four-hour task into something that takes minutes.

The part nobody talks about: deployment

Here's where a lot of these rollouts quietly fail.

The standard playbook involves sending in what Anthropic calls "Forward-Deployed Engineers" — specialists who embed inside the bank, get to know the systems, and co-design the agents from the ground up. It works well, until it doesn't. The engineers leave. The institutional knowledge leaves with them. Nobody internally knows how to modify the workflows, audit what the agent is doing, or adapt when something breaks.

Gartner estimates that by 2028, 70% of enterprises will walk away from AI implementations built this way — not because the AI failed, but because the dependency became unsustainable. What you built isn't a capability. It's a temporary project.

That's the real challenge, and it's why integration platforms like Engini.ai exist. Rather than requiring embedded engineering teams for every deployment, Engini connects Anthropic's models directly to over a thousand enterprise applications using pre-built skills and a policy-aware orchestration layer — so institutions can actually manage what they've deployed after the consultants go home.

What it looks like when it works

FIS — which quietly underpins a huge chunk of global financial infrastructure — has built a Financial Crimes AI Agent on Claude. Banks like BMO and Amalgamated Bank are using it to run AML investigations. What used to take hours now takes minutes. The agent gathers evidence across systems, weighs it against known fraud patterns, and surfaces the highest-risk cases for a human investigator to review. Every conclusion it draws links back to auditable source data.

That last part is important. Auditability isn't a nice-to-have in a regulated environment — it's the whole ballgame.
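To make the idea concrete, here is a minimal sketch of triage with an audit trail. It is not how the FIS agent is built. The class names, the `weight` field, and the example system names are all hypothetical, and the score is a plain sum chosen for illustration. The point is only that a case cannot be ranked unless it carries pointers back to the records it came from.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Evidence:
    source_system: str  # hypothetical system name, e.g. "core-banking"
    record_id: str      # identifier an auditor can look up in that system
    pattern: str        # the known pattern this evidence matched
    weight: float       # illustrative weight, not a calibrated risk score


@dataclass
class Case:
    case_id: str
    evidence: List[Evidence]

    @property
    def risk_score(self) -> float:
        # Assumption: risk is the sum of evidence weights.
        return sum(e.weight for e in self.evidence)

    def audit_trail(self) -> List[str]:
        # One pointer per piece of evidence, in "system:record" form.
        return [f"{e.source_system}:{e.record_id}" for e in self.evidence]


def triage(cases: List[Case], top_n: int) -> List[Case]:
    """Return the top_n highest-scoring cases for human review."""
    # Cases with no supporting evidence are never surfaced.
    supported = [c for c in cases if c.evidence]
    return sorted(supported, key=lambda c: c.risk_score, reverse=True)[:top_n]


if __name__ == "__main__":
    cases = [
        Case("C-1", [Evidence("core-banking", "txn-001", "structuring", 0.5)]),
        Case("C-2", [
            Evidence("core-banking", "txn-002", "structuring", 0.5),
            Evidence("wire-system", "wire-009", "rapid-movement", 0.25),
        ]),
        Case("C-3", []),
    ]
    for case in triage(cases, top_n=2):
        print(case.case_id, case.risk_score, case.audit_trail())
```

The investigator still makes the call. The sketch only decides what they look at first and shows them where each claim came from.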

The rule that doesn't change

No matter how capable these agents get, the accountability stays human. Anthropic's deployment policies require human-in-the-loop checkpoints for anything compliance-sensitive or client-facing. The Month-End Closer can draft every journal entry and flag every variance, but a controller has to review and approve before anything hits the ledger.

This isn't a limitation to work around. It's the right design. AI surfaces the work; humans own the outcome.
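In code, a checkpoint like this can be as simple as a ledger that refuses to post anything without a named approver. The sketch below is an assumption about how one might model it, not Anthropic's implementation or the Month-End Closer template itself. `JournalEntry`, `Ledger`, `approve`, and `ApprovalRequired` are hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from enum import Enum
from typing import List, Optional


class Status(Enum):
    DRAFT = "draft"        # produced by the agent, not yet reviewed
    APPROVED = "approved"  # signed off by a named human


class ApprovalRequired(Exception):
    """Raised when an entry without human sign-off is posted."""


@dataclass
class JournalEntry:
    entry_id: str
    account: str
    amount: Decimal
    memo: str
    status: Status = Status.DRAFT
    approved_by: Optional[str] = None


@dataclass
class Ledger:
    posted: List[JournalEntry] = field(default_factory=list)

    def post(self, entry: JournalEntry) -> None:
        # The gate: only entries with a recorded human approver are posted.
        if entry.status is not Status.APPROVED or not entry.approved_by:
            raise ApprovalRequired(f"{entry.entry_id} has no human sign-off")
        self.posted.append(entry)


def approve(entry: JournalEntry, reviewer: str) -> JournalEntry:
    """Record a human reviewer's approval on a drafted entry."""
    entry.status = Status.APPROVED
    entry.approved_by = reviewer
    return entry


if __name__ == "__main__":
    ledger = Ledger()
    draft = JournalEntry("JE-1", "6100", Decimal("1250.00"), "Accrued rent")

    try:
        ledger.post(draft)  # the agent's draft alone cannot reach the ledger
    except ApprovalRequired as exc:
        print("blocked:", exc)

    ledger.post(approve(draft, reviewer="controller@example.com"))
    print("posted entries:", len(ledger.posted))
```

The design choice worth noticing is that the check lives in `post`, not in the agent. The agent can draft as much as it likes, but the only path to the ledger runs through a human's name.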

The institutions that figure this out — that treat these agents as tools for amplifying judgment rather than replacing it — are the ones that will actually build something durable. The ones chasing headcount reduction as the primary goal tend to create new risks faster than they eliminate old ones.

The technology is genuinely ready. The question now is whether the deployment infrastructure, the governance, and the internal will to manage it correctly can keep up.

Frequently Asked Questions

What is a forward-deployed engineer (FDE)?

An FDE is an Anthropic specialist who embeds inside client teams to co-design agents. Engini reduces long-term dependency on FDEs by providing native tools and pre-built skills your team can manage independently after initial deployment.

Does this reduce the need for human oversight?

No. Both Anthropic and Engini require human-in-the-loop checkpoints for all compliance-sensitive and client-facing outputs. As Andrew Altfest states: “You are responsible for the work you are doing.”

How do agent templates plug into existing banking systems?

They connect via desktop plugins or Managed Agent cookbooks using data connectors and MCP servers. Plugin deployment runs alongside analysts in Claude Cowork, while Managed Agent cookbooks run autonomously on the Claude Platform with per-tool permissions and managed credential vaults. Engini adds native integrations with over 1,000 enterprise applications, reducing implementation time significantly.